Understanding ChatGPT For Beginners

ChatGPT is making waves, big time! So it would be remiss of me not to write a blog post about it.

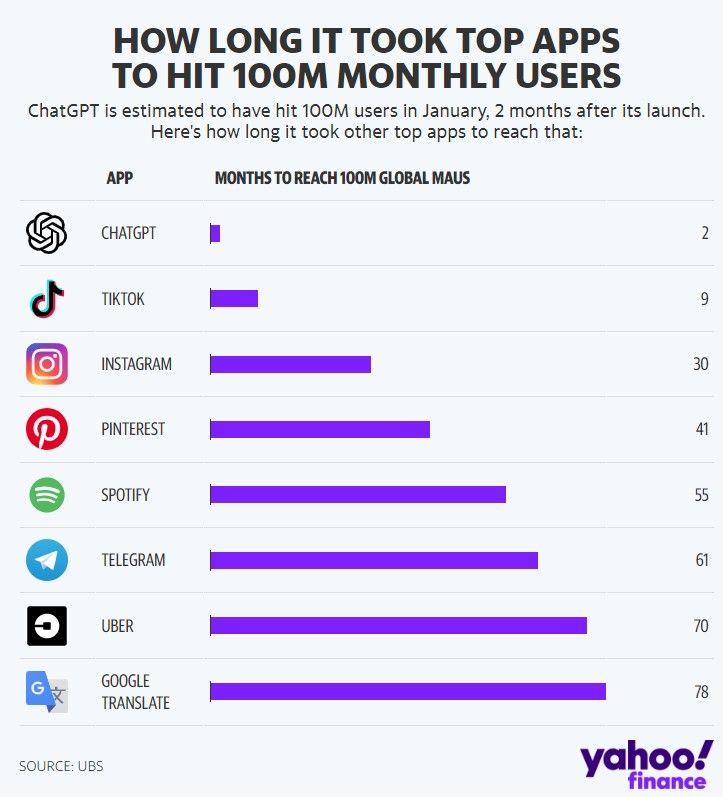

Take a look at this chart by UBS, which I sourced from Yahoo Finance, showing how quickly ChatGPT hit 100 million users: just 2 months. Crazy, right? It puts other platforms to shame: TikTok and Spotify took 9 months and 4.5 years, respectively, to reach the same number.

I feel compelled to take a moment to commend the excellent execution of the chart. It was clearly crafted by someone who knows their stuff when it comes to charts. The attention to detail and the thought that went into it are evident in choices such as using a bar chart rather than a column chart and pre-sorting the bars. A good chart minimizes the mental energy the audience needs to process it. If you're into data visualization, follow me on Instagram @danni_dan_liu. Data visualization is one of the topics I cover.

Alright, let's come back to ChatGPT. It's pretty clear we all think it's a mega cool tool, but let's face it, it's also a little freaky how smart it is. A day or two ago, I watched this programmer with 20 years of programming experience share how he uses ChatGPT. He's got ChatGPT handling all sorts of tasks, from boring coding bits to helping him with challenging coding problems. ChatGPT even taught him some new tricks! Kind of spooky, right? I mean, when tech gets this smart, it's hard not to worry about our livelihood.

According to a 2023 research paper by OpenAI, "80% of the US workforce could have at least 10% of their work tasks affected by the introduction of GPTs, while around 19% of workers may see at least 50% of their tasks impacted". That's a big deal, but I wouldn't fear it. Call me an optimist, but the way I view GPT, and any technology, is that it is here to enhance our lives. Sure, roles and tasks will be affected, but it also means we get to do more stimulating things and reclaim time to spend on valuable and meaningful things. If you're scared, I urge you not to succumb to fear. There are two important antidotes to fear: one is action, and the other is knowledge. In this post, let's get to know this technology better.

Here's what I'll cover:

- What GPT is and How it Works

- Applications of GPT

- Limitations of GPT

What GPT is and How it Works

GPT is short for Generative Pre-trained Transformer, a type of artificial intelligence language model developed by OpenAI, an artificial intelligence laboratory based in San Francisco. The 'generative' part refers to the model's ability to generate human-like text based on the input it receives.

Before I go further, I want to point out that while ChatGPT and GPT are related, they're not the same. ChatGPT is a specific implementation of the GPT model that's designed for generating conversational text. In simple terms, think of GPT as a car engine, and ChatGPT as a specific car model that uses that engine. So GPT is the underlying technology, and ChatGPT is a specific application of that technology for the purpose of conversation.

So, how does GPT do its thing? Picture GPT as a student on a mission to binge-read billions of pages from an insanely huge library, with everything from novels and textbooks to newspapers and blogs. As it reads, it begins to grasp the rhythm and flow of words and sentences, gradually picking up language patterns.

Then comes the real test for our student, or GPT in this case. The mission? Predict the next word in a sentence. For example, given a starter like "Once upon a time," GPT has to figure out what the most likely next word should be based on everything it has 'read'. So, it might come up with "Once upon a time, there was..." and so on.
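To make the "predict the next word" idea concrete, here's a minimal sketch in Python. It is not how GPT actually works under the hood: it simply counts which word follows which in a tiny made-up corpus and picks the most frequent follower. The corpus and the function names (`train_bigram_counts`, `predict_next`) are my own, invented purely for illustration.

```python
from collections import Counter, defaultdict

# A toy "library": three made-up sentences, nothing like GPT's real training data.
CORPUS = [
    "once upon a time there was a princess",
    "once upon a time there was a dragon",
    "once upon a time there lived a king",
]


def train_bigram_counts(sentences):
    """Count, for every word, which words follow it and how often."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current_word, next_word in zip(words, words[1:]):
            counts[current_word][next_word] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


counts = train_bigram_counts(CORPUS)
print(predict_next(counts, "time"))   # there
print(predict_next(counts, "there"))  # was
```

A real GPT model looks at far more than the single previous word and learns its patterns with a neural network rather than a lookup table, but the goal is the same: guess the most likely continuation.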

This first part of the process, where GPT gorges on a mountain of internet text, is called "pretraining". Think of it as the equivalent of a student cramming for an exam. The 'exam' here is the wide range of tasks GPT is later assigned, from answering questions to writing essays.

Once it's done pretraining, GPT moves on to a stage known as "fine-tuning". This stage is like a student practising for a specific subject. Here, GPT trains on a more focused set of data, carefully crafted with the help of human reviewers following OpenAI's guidelines. This helps GPT whip up safe and useful responses and get better at specific tasks. And here's a fun fact: training GPT-3, the model family behind ChatGPT, was estimated to cost just under $5 million, according to Lambda Labs.
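Here's a rough sketch of the pretrain-then-fine-tune idea, using simple word-follower counts as a stand-in for a real model. Treat it as an analogy only: actual fine-tuning adjusts the weights of a neural network, whereas this toy just adds extra, more heavily weighted counts from a small curated dataset. The example sentences, the `weight=5` value, and all function names are assumptions I made up for illustration.

```python
from collections import Counter, defaultdict


def count_followers(sentences, weight=1):
    """Count which word follows which; `weight` controls how much each example counts."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current_word, next_word in zip(words, words[1:]):
            counts[current_word][next_word] += weight
    return counts


def merge(base, extra):
    """Combine two sets of counts into a new one without modifying either input."""
    merged = defaultdict(Counter)
    for source in (base, extra):
        for word, followers in source.items():
            merged[word].update(followers)
    return merged


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word)
    return followers.most_common(1)[0][0] if followers else None


# "Pretraining" data: lots of general text (here, three made-up sentences).
GENERAL_TEXT = [
    "the answer is obvious",
    "the answer is obvious",
    "the answer is complicated",
]
# "Fine-tuning" data: a small, curated set that is given extra weight.
CURATED_TEXT = ["the answer is nuanced"]

pretrained = count_followers(GENERAL_TEXT)
fine_tuned = merge(pretrained, count_followers(CURATED_TEXT, weight=5))

print(predict_next(pretrained, "is"))  # obvious
print(predict_next(fine_tuned, "is"))  # nuanced
```

The point is that a small amount of carefully chosen data can shift what the model prefers to say, even though most of what it "knows" still comes from the much larger pretraining stage.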

GPT owes its smarts to something known as a transformer neural network architecture. You could see it as the 'brain' of GPT that helps it understand and generate text. This architecture pays attention to each word in the input and its context, deciding which parts of the sentence are vital for predicting the next word.
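For the curious, the "paying attention" step can be sketched in a few lines of Python. This is a stripped-down version of scaled dot-product attention for a single query, written with plain lists so it runs without extra libraries. The two-number vectors below are made up for illustration; in a real transformer they are learned during training and are much larger.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(score - highest) for score in scores]
    total = sum(exps)
    return [value / total for value in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for one query vector.

    Returns the attention weights (one per input word) and the
    weighted average of the value vectors.
    """
    scale = math.sqrt(len(query))
    scores = [
        sum(q * k for q, k in zip(query, key)) / scale
        for key in keys
    ]
    weights = softmax(scores)
    dim = len(values[0])
    output = [
        sum(weight * value[i] for weight, value in zip(weights, values))
        for i in range(dim)
    ]
    return weights, output


# Made-up vectors for three input words (hypothetical numbers, not learned ones).
words = ["once", "upon", "time"]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
query = [1.0, 1.0]  # stands for "what should I focus on to predict the next word?"

weights, output = attention(query, keys, values)
for word, weight in zip(words, weights):
    print(f"{word}: {weight:.2f}")
```

The weights always add up to 1, and the word whose key lines up best with the query (here, "time") gets the largest share. That is the sense in which the architecture decides which parts of the sentence matter most.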

But here's the kicker: despite all this training and its knack for producing seemingly intelligent text, GPT doesn't truly 'get' the text like we humans do. It's not conscious, it doesn't have beliefs or desires, and it doesn't have an understanding of the world. It's basically a pattern-matching whiz, creating sentences based on patterns it picked up during training.

Applications of GPT

Now let's talk about the amazing things GPT can do. There are a ton of applications in production already, and I won't be able to list them all. However, most of them fall under one of these four categories:

- Companionship

- Question Answering

- Utility

- Creativity

Let's look at them one by one.

Companionship: This is all about creating AI applications that can carry a conversation just like a human buddy. They can handle casual chats, lend an ear when you need emotional support, or help you brush up your language skills. A good example is Replika, a chatbot that's up for a conversation 24/7.

Question Answering: These applications put GPT to work, providing detailed answers to all sorts of questions. This could be anything from simple facts like, "How tall is the Eiffel Tower?" to more complicated stuff that needs some thought like, "Can you explain quantum physics to me?"

Utility: This category is about applications leveraging GPT to increase productivity or efficiency. Consider Grammarly, which uses AI to enhance its grammar-checking and sentence suggestion features. Also, some coding tools use GPT to generate or suggest code, making a developer's life a whole lot easier. The CodeGPT extension for the Visual Studio Code editor is one such tool.

Creativity: Here, GPT gets creative, helping us humans up our creativity game or even generating creative content on its own. An example is Jarvis AI (since renamed Jasper), a writing tool that uses GPT for tasks like marketing copywriting and content idea generation.

Limitations of GPT

Although GPT has really impressive capabilities, it also has limitations. Let's look at some of the key ones:

Hallucination: Every now and then, GPT can pop out outputs that are factually off the mark. As OpenAI themselves have stated, "ChatGPT sometimes writes plausible-sounding but incorrect or nonsensical answers". These are called "hallucinations", and they occur because GPT doesn't actually understand the real world or the veracity of the info it's been trained on. It's just generating responses based on patterns it's seen. So, it's important to cross-check any crucial information that GPT churns out with reliable sources. While GPT is a helpful tool for many tasks, it's not a definitive fountain of factual knowledge.

Biases: GPT can sometimes spit out content that mirrors unfair stereotypes or lopsided views found in its training data. For instance, if you ask ChatGPT about the world's best food, it might serve up 'pizza' as the answer. That's not because pizza is the global champion of food (although some might argue otherwise!); it's because pizza gets a lot of love in the online forums and texts that make up GPT's training data. Basically, it's showing a bias from what it's been fed during training.
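The pizza example boils down to counting: whatever shows up most often in the training text tends to win. Here's a tiny Python sketch of that idea. The forum posts and the list of foods are invented for illustration, and a real model learns its preferences in a far more indirect way, but the skew comes from the same place.

```python
from collections import Counter

# Hypothetical forum posts standing in for "training data".
POSTS = [
    "pizza is the best food",
    "nothing beats pizza on a friday",
    "i love pizza",
    "pho is the best food",
    "jollof rice is underrated",
]
FOODS = ["pizza", "pho", "jollof rice"]


def count_mentions(posts, foods):
    """Count how many posts mention each food."""
    mentions = Counter()
    for post in posts:
        for food in foods:
            if food in post:
                mentions[food] += 1
    return mentions


def most_mentioned(posts, foods):
    """Return the food that appears in the most posts."""
    return count_mentions(posts, foods).most_common(1)[0][0]


print(most_mentioned(POSTS, FOODS))  # pizza
```

Pizza "wins" only because it is mentioned in three of the five posts, not because anyone measured which food is actually best. Change the posts and the answer changes with them.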

Lack of Creativity: Despite GPT having many applications in the creative arena, it doesn't quite hit the mark when it comes to human-like creativity. Sure, if you ask ChatGPT to write a poem, it'll generate something that looks and sounds like a poem. But it's not creating a poem like a human would. Instead, it's kicking out text based on patterns it's picked up during training. So, don't expect it to pen any original or emotionally stirring sonnets anytime soon!

Verbosity: GPT can be a bit of a chatterbox. It tends to over-explain and often repeats certain phrases, like reminding you repeatedly that it's a language model trained by OpenAI. These traits result from biases in its training data, where it's been taught to favour longer, more detailed answers that appear thorough. But while it might be a tad long-winded at times, it certainly gets the job done!

To learn more about its limitations, you can check out the paper Language Models are Few-Shot Learners, by OpenAI. Warning: it's very technical, and a lot of it goes over my head.

And there you have it! The fascinating world of ChatGPT and its underlying technology, GPT. Despite the rapid adoption and the exciting potential it brings to various fields, it's essential to keep its limitations in mind. As with all technology, it's here to aid us; the key is how we adapt. So, whether you're a fan of this AI revolution or a sceptic, remember - knowledge is (potential) power. Embrace this AI evolution as it paves the way to an innovative future.